@FernetMenta

Thank you for the link to the EDID discussion. Heh-heh,

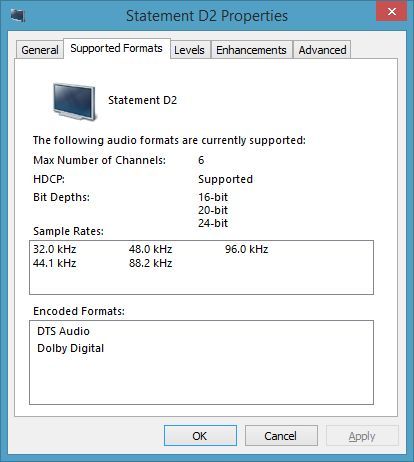

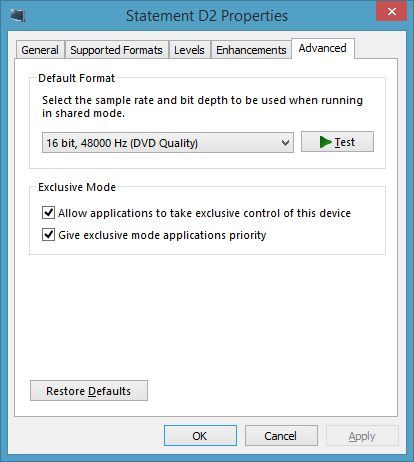

now I remember stumbling upon that thread when searching for a way to override the video info coming from my AVR (Anthem D2).

When the D2 was first connected, the option to set xbmc's resolution to 1920x1080@60p disappeared from the dropdown list (video worked fine with the TV connected directly), and the culprit was identified as "funky EDID" coming from the D2. I found an example for Intel somewhere and cobbled together this xorg.conf file:

Code:

    Section "Device"
        Identifier "Device0"
        Driver "intel"
        VendorName "INTEL Corporation"
    EndSection

    Section "Screen"
        Identifier "Screen0"
        Device "Device0"
        Monitor "HDMI2"
        DefaultDepth 24
        SubSection "Display"
            Depth 24
            Modes "[email protected]" "1920x1080@24p" "1920x1080@60p"
        EndSubSection
    EndSection

    Section "Monitor"
        Identifier "HDMI2"
        HorizSync 14.0 - 70.0
        VertRefresh 24.0 - 62.0
        Option "DPMS" "true"
        Modeline "1920x1080@24p" 74.230 1920 2560 2604 2752 1080 1084 1089 1125 +hsync +vsync
        Modeline "1920x1080@50p" 148.500 1920 2448 2492 2640 1080 1084 1089 1125 +hsync +vsync
        Modeline "[email protected]" 148.352 1920 1960 2016 2200 1080 1082 1088 1125 +hsync +vsync
        Modeline "1920x1080@60p" 148.500 1920 2008 2056 2200 1080 1084 1089 1125 +hsync +vsync
    EndSection

    Section "Extensions"
        # fixes tearing
        Option "Composite" "Disable"
    EndSection

It seems to cure the output resolution roadblock, but I'm open to suggestions for improvement. Given that the D2 has an excellent Gennum GF9350 VXP image processor, I would have expected it to announce its capabilities correctly, but so far it hasn't been that elegant. Upgrading to Gotham nightlies didn't lift the resolution selection limitation, so the override remains on my system. I hope it's not affecting the audio data received through EDID.
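As a quick sanity check on the modelines: a mode's vertical refresh is its pixel clock divided by (htotal * vtotal), and its horizontal rate is the pixel clock divided by htotal. The Python 3 sketch below applies that to three of the modelines and compares the results with the Monitor section's HorizSync and VertRefresh ranges. The `check` helper is hypothetical, and its comparison is deliberately strict; the X server may apply a small tolerance of its own.

```python
"""Sketch: check X11 modelines against the Monitor section's sync ranges.

vertical refresh (Hz) = pixel clock / (htotal * vtotal)
horizontal rate (kHz) = pixel clock / htotal
Ranges are copied from the xorg.conf above. The comparison is strict; the
X server may apply a small tolerance of its own.
"""
import shlex

# Three modelines copied verbatim from the Monitor section.
MODELINES = """\
Modeline "1920x1080@24p" 74.230 1920 2560 2604 2752 1080 1084 1089 1125 +hsync +vsync
Modeline "1920x1080@50p" 148.500 1920 2448 2492 2640 1080 1084 1089 1125 +hsync +vsync
Modeline "1920x1080@60p" 148.500 1920 2008 2056 2200 1080 1084 1089 1125 +hsync +vsync
"""

HSYNC_KHZ = (14.0, 70.0)    # HorizSync 14.0 - 70.0
VREFRESH_HZ = (24.0, 62.0)  # VertRefresh 24.0 - 62.0


def check(line):
    """Return (name, hsync_khz, vrefresh_hz, in_range) for one Modeline line."""
    # Fields: Modeline name clock hdisp hsyncstart hsyncend htotal
    #         vdisp vsyncstart vsyncend vtotal [flags...]
    fields = shlex.split(line)  # shlex strips the quotes around the mode name
    name, clock_mhz = fields[1], float(fields[2])
    htotal, vtotal = int(fields[6]), int(fields[10])
    hsync_khz = clock_mhz * 1000.0 / htotal
    vrefresh_hz = clock_mhz * 1e6 / (htotal * vtotal)
    in_range = (HSYNC_KHZ[0] <= hsync_khz <= HSYNC_KHZ[1]
                and VREFRESH_HZ[0] <= vrefresh_hz <= VREFRESH_HZ[1])
    return name, hsync_khz, vrefresh_hz, in_range


if __name__ == "__main__":
    for line in MODELINES.splitlines():
        name, h, v, ok = check(line)
        print("%-14s %7.3f kHz %8.4f Hz %s" % (name, h, v, "ok" if ok else "OUT OF RANGE"))
```

Run directly, the "@24p" line comes out at about 23.976 Hz, just under the 24.0 Hz VertRefresh floor, so this strict check flags it; the 50p and 60p lines land exactly on 50 Hz and 60 Hz. The same formula puts the 148.352 MHz modeline at roughly 59.94 Hz.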

But that's video stuff, and we're talking about getting four audio channels to play as four channels.

Reading through that discussion, the similarity to my issue struck me, and when chewitt stated (in this post):

Quote:The major revolution in Eden was that AudioEngine stopped blindly trusting filesystem config files and started evaluating how hardware was presented in the OS so that autoconfiguration worked properly. I'd wager an educated guess that after 2.99.2 the XBMC devs fixed bugs so AudioEngine correctly detects the incorrect/bad EDID and configures itself appropriately - over time we have seen many AVR's and TV's with bad EDID and while current AE is not perfect it's stable and rarely to blame for this kind of thing.

...a new angle came to mind: is it possible xbmc makes the wrong output choice based on the info sent (or not sent) by my D2?

You configure 3.0 as 5.1 due to ALSA mapping requirements, OK. Is there a check at the ALSA driver stage for formats compatible with the receiving end? I presume so, and this may be a point of failure.

I know for a fact the D2 does not support 4-channel PCM (FL, FR, BL, BR), and yet xbmc somehow decides this is the correct format for HDMI output. ALSA may like FL, FR, BL, BR, but the Anthem doesn't see it (...and the D2 falls back to the first two channels, FL and FR). In this case, should xbmc choose the 5.1 format instead?
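To make the 4.0-versus-5.1 question concrete: carrying four channels "as 5.1" amounts to placing them in a six-channel frame with silent center and LFE slots, while a sink that only takes the first two slots keeps just FL and FR. The sketch below uses a hypothetical `remap` helper with layout names taken from this post, and it assumes the common WAV/SMPTE 5.1 order (FL, FR, FC, LFE, BL, BR); ALSA drivers may order channels differently, so it illustrates the idea rather than what xbmc or ALSA actually do.

```python
"""Sketch: carry a 4.0 stream inside a 5.1 frame by padding with silence.

Assumption: the 5.1 slot order is the common WAV/SMPTE order
(FL, FR, FC, LFE, BL, BR). ALSA drivers may use a different order.
"""
# Layout names follow the post; these constants are illustrative only.
LAYOUT_40 = ["FL", "FR", "BL", "BR"]
LAYOUT_51 = ["FL", "FR", "FC", "LFE", "BL", "BR"]
LAYOUT_20 = ["FL", "FR"]


def remap(frames, src, dst):
    """Reorder interleaved frames from layout src to layout dst.

    Channels present in dst but not in src are filled with silence (0);
    channels present in src but not in dst are dropped.
    """
    index = {name: i for i, name in enumerate(src)}
    out = []
    for frame in frames:
        if len(frame) != len(src):
            raise ValueError("frame width does not match the source layout")
        out.append([frame[index[name]] if name in index else 0 for name in dst])
    return out


if __name__ == "__main__":
    quad = [[1, 2, 3, 4]]  # one frame: FL=1, FR=2, BL=3, BR=4
    print(remap(quad, LAYOUT_40, LAYOUT_51))  # [[1, 2, 0, 0, 3, 4]]
    print(remap(quad, LAYOUT_40, LAYOUT_20))  # [[1, 2]]
```

The second call mimics what the D2 appears to do with the 4-channel stream: only FL and FR survive.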

Clearly, I need to corral the EDID coming from the D2; then answers can be found. I read the wiki entry on Creating & Using EDID.BIN, but it looks like a procedure I can't use on OE (maybe I'm wrong). I hope somebody can tell me how to extract the EDID.BIN being used by my Intel Sandy Bridge NUC under OpenELEC.
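On Linux, the kernel's DRM layer exposes each connector's EDID as a binary file under /sys/class/drm/, so something like `cat /sys/class/drm/card0-HDMI-A-2/edid > /storage/edid.bin` may work on OpenELEC (the connector name is a guess; list that directory to find the right one). For this thread, the interesting part is the CEA-861 extension block: its Audio Data Block lists each supported format with a maximum channel count, and its Speaker Allocation block says which speaker pairs exist. Note that EDID carries a maximum LPCM channel count and a speaker map, not a list of specific layouts such as FL, FR, BL, BR. The Python 3 sketch below (`parse_audio` is a hypothetical helper that decodes only those two blocks) prints them, which should show whether the D2 advertises multichannel LPCM at all.

```python
"""Sketch: list the audio capabilities a sink advertises in its EDID.

Assumes the EDID was dumped to a file first (e.g. from /sys/class/drm/<connector>/edid).
Only the CEA-861 Audio and Speaker Allocation data blocks are decoded.
"""
import sys

# CEA-861 audio format codes (subset); 1 = linear PCM.
FORMATS = {1: "LPCM", 2: "AC-3", 7: "DTS", 10: "E-AC-3", 11: "DTS-HD", 12: "MLP/TrueHD"}
# Sample-rate bits 0..6 of SAD byte 1.
RATES_KHZ = [32, 44.1, 48, 88.2, 96, 176.4, 192]
# Speaker Allocation bits 0..6 of payload byte 0.
SPEAKERS = ["FL/FR", "LFE", "FC", "RL/RR", "RC", "FLC/FRC", "RLC/RRC"]


def parse_audio(edid):
    """Return (short_audio_descriptors, speaker_list) from all CEA extensions."""
    if len(edid) < 128 or edid[:8] != b"\x00\xff\xff\xff\xff\xff\xff\x00":
        raise ValueError("not an EDID base block")
    sads, speakers = [], []
    for start in range(128, len(edid) - 127, 128):
        block = edid[start:start + 128]
        if block[0] != 0x02:  # 0x02 = CEA-861 extension tag
            continue
        end = block[2]  # offset where detailed timing descriptors begin
        i = 4           # the data block collection starts at byte 4
        while i < end:
            tag, length = block[i] >> 5, block[i] & 0x1F
            payload = block[i + 1:i + 1 + length]
            if tag == 1:  # Audio Data Block: 3-byte Short Audio Descriptors
                for j in range(0, length - length % 3, 3):
                    b0, b1, _b2 = payload[j:j + 3]
                    code = (b0 >> 3) & 0x0F
                    sads.append({
                        "format": FORMATS.get(code, "code %d" % code),
                        "max_channels": (b0 & 0x07) + 1,
                        "rates_khz": [r for bit, r in enumerate(RATES_KHZ) if b1 & (1 << bit)],
                    })
            elif tag == 4 and length >= 1:  # Speaker Allocation Data Block
                speakers = [n for bit, n in enumerate(SPEAKERS) if payload[0] & (1 << bit)]
            i += 1 + length
    return sads, speakers


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], "rb") as f:
        descriptors, spk = parse_audio(f.read())
    for d in descriptors:
        print(d)
    print("speakers:", spk)
```

Usage would be `python3 edid_audio.py edid.bin` (file name hypothetical), on any machine with Python 3 once the dump has been copied off the NUC.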

Quote:AFAIK you need a Linux machine for building OpenElec.

Hmmmm... no Linux machine here. Uh-oh.

Quote:Do you have a chance to test on Windows? This way we could narrow it down somehow.

I appreciate the alternative suggestion. Windows XP desktop here (Luddite, yes, I know) and no HDMI on that machine. Double uh-oh.

Thanks again for playing,

Grant